C&L - 20/04/26

The past experiments were, once again, about getting a purely diffusion-based approach (built on a Marigold-adjacent diffusion architecture) to converge towards a reasonable metric depth estimator.

The second half of March was about one core question: can we extend Mari from predicting only RGB and depth to also predicting visibility (uncertainty), and does that improve depth estimation in fog? This post walks through how the experiments progressed: from a major code refactor, through training the new uncertainty head, to comparing the results against baseline Marigold.

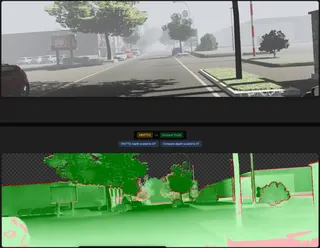

This week was mostly about evaluating the current model and naively comparing it with Marigold. For both models, the relative depth output is always scale-and-shift aligned so that it is comparable with the metric ground truth.
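A minimal sketch of what the scale-and-shift alignment could look like. It assumes a per-image least-squares fit in depth space (not disparity space), using only pixels with positive ground truth. It solves for $s, t$ minimising $\sum_i (s\,d_i + t - g_i)^2$ and then reports AbsRel on the aligned prediction. The function names `fit_scale_shift` and `abs_rel` are hypothetical and not taken from the project's evaluation code.

```python
from typing import Sequence, Tuple


def fit_scale_shift(pred: Sequence[float], gt: Sequence[float]) -> Tuple[float, float]:
    """Closed-form least squares for (s, t) minimising sum((s*pred + t - gt)^2).

    Assumption: only pixels with gt > 0 are treated as valid.
    """
    pairs = [(p, g) for p, g in zip(pred, gt) if g > 0]
    n = len(pairs)
    if n < 2:
        raise ValueError("need at least two valid pixels")
    sum_p = sum(p for p, _ in pairs)
    sum_g = sum(g for _, g in pairs)
    sum_pp = sum(p * p for p, _ in pairs)
    sum_pg = sum(p * g for p, g in pairs)
    denom = n * sum_pp - sum_p ** 2
    if denom == 0:
        raise ValueError("prediction is constant over the valid pixels")
    # Normal equations of a 1-D linear regression of gt on pred
    s = (n * sum_pg - sum_p * sum_g) / denom
    t = (sum_g - s * sum_p) / n
    return s, t


def abs_rel(pred: Sequence[float], gt: Sequence[float]) -> float:
    """Mean absolute relative error after scale-and-shift alignment (valid pixels only)."""
    s, t = fit_scale_shift(pred, gt)
    errs = [abs(s * p + t - g) / g for p, g in zip(pred, gt) if g > 0]
    return sum(errs) / len(errs)
```

For example, a relative prediction `[0.0, 0.5, 1.0]` against metric ground truth `[2.0, 3.0, 4.0]` aligns with `s = 2`, `t = 2`. Because the fit is done per image with access to the ground truth, this comparison favours relative-depth models and is only a rough, optimistic check of metric accuracy.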

The last two weeks were spent on many experiments with the RDS dataset and the MERGE architecture. The status quo was:

- Dehazing via SD2.1 works well.
- Novelty from MERGE: use a Marigold-adjacent architecture with dual converters ("prediction heads") for image generation and depth maps.
- Early MERGE experiments worked well on VKITTI2 (352 x 1216 RGB).
- Extend tests to real-drive-sim.

As a placeholder, we call the model we will train:

We will use UniDepthV2 as a baseline for comparison: